Why this step?

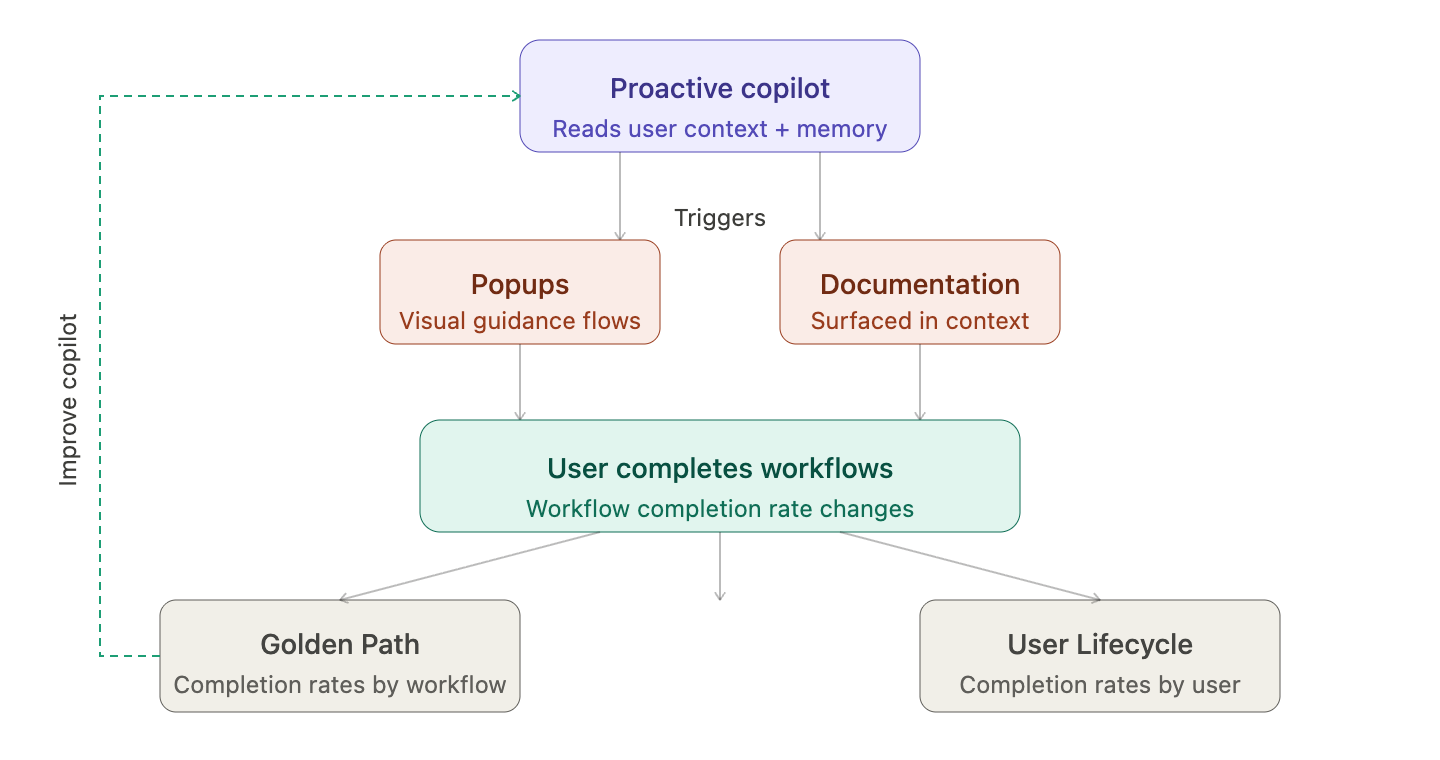

Building a copilot is only half the job: you also need to know whether it is working and improving adoption among your users. In this system, your documentation and popups are the outputs your copilot triggers. Evaluation means checking that those outputs are accurate, timely, and relevant to each user.

The primary signal to watch is simple: is the workflow completion rate increasing over time? If a growing share of your users are completing your key workflows, your copilot is doing its job.
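If you keep your own record of workflow attempts, the same signal can be checked outside the product. The sketch below is a minimal illustration, not the product's own calculation: the `WorkflowAttempt` record, its fields, and both function names are hypothetical, and it assumes one row per attempt with a sortable week label.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class WorkflowAttempt:
    # Hypothetical record shape; adapt it to whatever data you actually have.
    user_email: str
    workflow: str
    week: str  # sortable label such as "2024-W01"
    completed: bool


def completion_rate_by_week(attempts):
    """Return {week: completed attempts / all attempts}, ordered by week."""
    started = defaultdict(int)
    finished = defaultdict(int)
    for attempt in attempts:
        started[attempt.week] += 1
        if attempt.completed:
            finished[attempt.week] += 1
    return {week: finished[week] / started[week] for week in sorted(started)}


def is_increasing(rates):
    """Crude trend check: is the latest week's rate above the first week's?"""
    values = list(rates.values())
    return len(values) >= 2 and values[-1] > values[0]
```

Comparing only the first and last week is deliberately simple. With noisy data, you may prefer to compare averages over several weeks.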

How to evaluate

You can track this at two levels:

- By workflow — go to Golden Path and review completion rates across your user base

- By user — filter Golden Path by user email, or navigate to User Lifecycle for an individual view

Coming soon: a side-by-side view of user interactions and copilot conversations. You'll be able to pull user memory and conversation history together to evaluate whether your copilot is responding with the right context at the right moment.

How to use filters

Two filters matter most:

- Product section — scope sessions to a specific area of your product

- Task filter + task-level completion rate — find users who have or haven't completed specific workflows, and at what rate
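To make the logic of the two filters concrete, here is a rough sketch of what combining them amounts to: scope sessions to one product section, pick one task, then split users into those who have and haven't completed it. The `Session` record and the `task_completion` function are hypothetical names for illustration. They do not describe how the product stores or computes this.

```python
from dataclasses import dataclass


@dataclass
class Session:
    # Hypothetical record shape, for illustration only.
    user_email: str
    product_section: str
    task: str
    completed: bool


def task_completion(sessions, product_section, task):
    """Scope to one section and task; return (rate, completed users, pending users).

    The rate is counted per user: a user counts as completed if any of
    their scoped sessions completed the task.
    """
    scoped = [
        s for s in sessions
        if s.product_section == product_section and s.task == task
    ]
    users = {s.user_email for s in scoped}
    completed_users = {s.user_email for s in scoped if s.completed}
    rate = len(completed_users) / len(users) if users else 0.0
    return rate, sorted(completed_users), sorted(users - completed_users)
```

The pending list is the useful part in practice: those are the users whose sessions are worth reviewing to see where the workflow stalls.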